Shivering Timbers's blog

Half a Year of Solar (almost)

Fri, 12/26/2014 - 18:04 - Shivering Timbers

Our solar panels were activated five months ago, at the end of July. That was a couple of months later than we had been hoping, but the modules we ordered were in short supply at the time.

Since then, perhaps the most remarkable thing about living with solar power is just how drama-free the whole thing is. It took a fair amount of effort and planning to get the system installed. But now that it's in place, it just sort of sits there and generates power.

Now that we've had the solar panels in place for almost half a year, here are a few observations in no particular order:

- Probably the coolest part of the whole system is not the solar panels but the power monitoring system. This lets us view, in real time, how much power we're using and how much we're generating. This turns out to be a great motivator to turn off lights when we leave the room and otherwise look for ways to save energy. We have cut our household energy consumption by about 10% just thanks to having this tool.

- The TenK modules are performing as advertised when we have partial shade. One reason for selecting this brand was that the roof over our garage (which is where half the solar panels were installed) gets a lot of dappled shade in the winter months, and TenK modules are designed to be highly shade tolerant. Most solar panels will lose a large fraction of their power output if there's even a small patch of shade, but the TenK modules keep generating under these conditions.

- Speaking of shade, solar panels don't generate much power when it's cloudy, and we're in the middle of the cloudiest stretch of weather in Minneapolis since the 1960s. November and December are normally the cloudiest and darkest months of the year, but we have literally had only two even partly sunny days in the past three weeks.

- However, despite the clouds, we have had relatively little snow cover. Since it's impossible to clear the snow off half of the solar panels, persistent snow cover is also pretty bad for our power production.

- Speaking of snow, when the panels are covered in snow and the temperature gets above freezing for a few hours, all the snow and ice tends to slide off in a big clump. From inside the house it sounds like being underneath an avalanche (which is pretty much what it is).

- We've had a few neighbors ask about solar, but it happens that ours is one of the few houses in the neighborhood that's suitable for solar panels. That's the downside to living in an area with a lot of big trees.

Our Solar System Takes Shape

Sat, 04/05/2014 - 13:47 - Shivering Timbers

In the past few months we have finalized the basic design of our solar power installation.

Our system will have two arrays, one over the garage and one on the main part of the house. Each array will have eight 410-watt solar panels from a local manufacturer called TenK. These will feed 12 microinverters made by Altenergy Power Systems. The total nameplate capacity of the system is 6.56 kW, but because the two arrays will face different directions it will never produce that much power at any given time. Instead, with a southwest and a southeast array, one will catch more morning sun, and the other will catch more afternoon sun.

The estimate is that this system will produce, on average, about 5,800 kWh per year. This is relatively low production for a system this size in this area, and the lower production is mostly because of partial shading on the arrays, especially in winter. The garage array, in particular, is estimated to produce almost no power in the month of December because the garage roof will be mostly shaded by the rest of the house. That's not such a great loss, though, since Minnesota gets relatively little solar energy in December anyway.
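For a sense of scale, here is a quick back-of-the-envelope check of the numbers above in Python: sixteen 410-watt panels and the 5,800 kWh/year estimate. The capacity factor (actual yearly output divided by what the system would produce running at full nameplate power every hour of the year) is a common way to compare systems. The function names are just illustrative.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours


def nameplate_kw(panel_count, watts_per_panel):
    """Total rated (nameplate) capacity in kilowatts."""
    return panel_count * watts_per_panel / 1000.0


def capacity_factor(annual_kwh, capacity_kw):
    """Fraction of the theoretical maximum the system actually delivers."""
    return annual_kwh / (capacity_kw * HOURS_PER_YEAR)


capacity = nameplate_kw(16, 410)             # two arrays of eight panels
print(capacity)                              # 6.56 kW
print(capacity_factor(5800, capacity))       # roughly 0.10
print(5800 / capacity)                       # roughly 884 kWh per kW per year
```

A capacity factor of about 10% is one way of putting a number on "relatively low production": the shading, the split orientation, and the long Minnesota winter all pull it down.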

We chose this system because of a very generous incentive program Minnesota is offering for solar panels made in Minnesota. For the first ten years the system is in production, we will get an incentive payment of $0.29/kWh for all the power it produces. This is in addition to the net metering credit which is currently about $0.12/kWh and will increase as electric rates go up. The Made in Minnesota incentive is paid for through a conservation program established several years ago by the state which requires electric utilities to set aside a small percentage of their revenue towards energy conservation programs.

The Made in Minnesota incentive is so generous that we expect this system to pay for itself in under ten years, despite the shading on our site and the slightly more expensive panels from TenK. Our benchmark for making solar worthwhile is that the system pays for itself within its lifetime (25-30 years), so this system meets that threshold by a large margin.
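Here is a rough sketch of how that payback math works. The incentive ($0.29/kWh for the first ten years) and net metering credit (about $0.12/kWh) come from the post. The $20,000 system cost in the example is a hypothetical placeholder, not our actual quote, and the model ignores rising electric rates, panel degradation, and any other incentives.

```python
def annual_value(annual_kwh, incentive_rate, net_metering_rate):
    """Dollars per year while both the incentive and net metering credit apply."""
    return annual_kwh * (incentive_rate + net_metering_rate)


def simple_payback_years(net_cost, annual_kwh, incentive_rate, net_metering_rate,
                         incentive_years=10, max_years=100):
    """First year in which cumulative savings cover the up-front cost.

    Assumes flat production and flat rates (no rate increases, no degradation);
    the incentive payment stops after incentive_years.
    """
    cumulative = 0.0
    for year in range(1, max_years + 1):
        rate = net_metering_rate + (incentive_rate if year <= incentive_years else 0.0)
        cumulative += annual_kwh * rate
        if cumulative >= net_cost:
            return year
    return None  # never pays back within max_years


# Hypothetical $20,000 net system cost, for illustration only.
print(annual_value(5800, 0.29, 0.12))                  # roughly 2378 dollars per year
print(simple_payback_years(20000, 5800, 0.29, 0.12))   # 9
```

With flat rates, any net cost under about $23,780 (ten years at roughly $2,378) pays back inside the ten-year incentive window. After that the savings drop to the net metering credit alone, which is why the payback period stretches out quickly for more expensive systems.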

TenK Modules

The TenK solar modules are a new and innovative product, which was another reason I liked this option. Some people might read "new and innovative" to mean "unproven and risky," especially for a major capital investment expected to last decades. For us, however, since one of our goals is to learn and explore solar energy, the chance to work with a product taking a new approach to solar power is definitely a bonus.

Traditionally, solar panels are very dumb devices. The basic solar module consists of a few dozen photovoltaic cells sealed in a weatherproof enclosure and wired together with a couple of diodes. In many cases, the panel manufacturer doesn't even make the solar cells; they just buy the components and assemble them into the final package. That's part of the reason why there are so many solar panel manufacturers and it's such a low-margin business. There has been fairly little technology in the module itself, and all the magic happens in manufacturing the photovoltaic cells and in the inverters and controllers.

TenK, on the other hand, takes a very different approach. They sell "smart" panels which incorporate the MPPT electronics (which maximize the harvest of power from the solar cells) into the module itself, and do a DC-to-DC power conversion to control the output of the module.

This allows them to get more power from the system in situations where a traditional module performs poorly (such as when half the module is shaded and the other half is in the sun). It also allows them to use a power bus for connecting the modules to the inverters, which makes it practical to generate a lot of power but keep the DC voltage at or below 60V.

The low voltage DC bus is important because high voltage DC (traditional photovoltaic strings can operate at hundreds of volts) is dangerous and requires special equipment to manage. The TenK modules also have built-in ground fault protection, so if there's a short circuit in the power bus the modules shut down automatically.

So (in theory) the TenK "smart" modules should allow us to get more power from our system (especially in December), and while the modules themselves are more expensive, the rest of the installation is simpler. The total system price quoted by our installer for the TenK system was about 10% higher per watt than what we were quoted for a more traditional system built around "dumb" panels, but it's possible we will actually get 10% more power from this system than from a similarly sized array from another manufacturer.

The risk, of course, is that TenK goes out of business and our modules break earlier than expected. With a more complex module there's more risk something will go wrong and the system will need to be repaired, and the solar module business is notoriously brutal.

In the near term, TenK seems fairly stable since they very recently raised a substantial amount of money from investors. I spoke to some of the company's early customers and they were all pleased, so I'm comfortable that they will be around to fix any problems which develop in the first few years.

Next Steps

One downside to the TenK modules is that the product is currently in short supply. Our installer advised us that we can expect the modules to be available in June, which is 2-3 months from now. We're hoping that won't get further delayed, since we want to take advantage of the most productive solar months of the year.

In the meantime, we're starting on the paperwork for the utility approvals and the solar incentive program, and looking at what work we can get done in advance so that when the solar panels arrive we can get into production as fast as possible.

Energy Storage: Potential Game-Changer for Renewables

Sun, 02/16/2014 - 18:41 - Shivering Timbers

Solar power has reached the point where, for ordinary consumers, it's generally about the same price as power from the electric company.

Wind energy has reached the point where, for utilities, it's generally about the same price as generating power from fossil fuels.

Not surprisingly, then, both residential solar and utility wind power are growing very fast in the U.S. I've seen some analysis showing that essentially all the net new generating capacity being built in this country is coming from renewable sources. I don't know how credible this is, but whether it's true or not today, it will be true in the not very distant future.

Solar and wind energy can continue to grow like this for many years, since they still represent a very small portion of our total electric generation. But the growth of renewable energy will eventually be limited by the fact that these energy sources are inherently intermittent. The sun doesn't always shine, and the wind doesn't always blow, and there's no way to control when you get power.

The problem is that electricity needs to be generated at the same time it is consumed. The power grid doesn't store power, it just moves it from one place to another.

Right now, storing electricity is a lot more expensive than generating it. In our neighborhood, it costs about $0.12/kWh to buy power from the electric company. Rechargeable batteries, on the other hand, cost (on the cheap end) around $0.50 for every kWh you use because the battery has a limited number of charge cycles before it needs to be replaced.

Given the cost of storage technology today, it is almost never economical to store excess renewable power for later use, even if the power is free (the only exception is if there are no other power generation options available--for example, a cabin in the woods). That means that, with today's technology, wind and solar power can't supply anything close to the majority of our electrical needs, since the power simply won't be generated at the right time.

An inexpensive way to store excess power for later use would radically change the economics of renewable energy. Lots of smart people are working on this problem, and there are several different approaches which could bear fruit.

Improvements in Battery Technology

Traditional batteries are the simplest way to store electricity for future use, but today's technology is simply too expensive for large quantities of power (except in specialty applications like electric cars). There's a lot of research into novel chemistry, better physical designs (including lots of nanotechnology), refinement of approaches like flow batteries, and so forth.

In order to become economical, there needs to be at least an order of magnitude improvement in the cost of large batteries per lifetime kWh (where the lifetime kWh is the capacity of the battery multiplied by the number of charge cycles before the battery has to be replaced). The good news is that there doesn't seem to be any fundamental limitation to getting there--it's possible to build rechargeable batteries from relatively cheap and abundant raw materials. The bad news is that the cost of battery technology seems to be dropping only relatively slowly, and it will take a long time to cut the price by an order of magnitude without a major breakthrough.
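The lifetime-kWh definition above turns into a one-line calculation. The battery in the example ($1,000, 2 kWh, 1,000 charge cycles) is a made-up illustration chosen to land near the $0.50/kWh figure earlier in the post, not a real product. The sketch also ignores depth-of-discharge limits and round-trip losses, both of which make real storage more expensive.

```python
def cost_per_lifetime_kwh(price_dollars, capacity_kwh, cycles):
    """Battery price divided by all the energy it can cycle before replacement."""
    lifetime_kwh = capacity_kwh * cycles
    return price_dollars / lifetime_kwh


GRID_PRICE = 0.12  # $/kWh, our neighborhood retail rate from the post

storage = cost_per_lifetime_kwh(1000, 2.0, 1000)   # hypothetical battery
print(storage)                  # 0.5
print(storage / GRID_PRICE)     # storage costs roughly 4x what grid power does
print(storage / 10)             # an order-of-magnitude cut would reach 0.05
```

Even at $0.05 per lifetime kWh, storage would still add a noticeable fraction to the cost of every kWh it shifts, which is why an order of magnitude is roughly the minimum improvement needed.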

Non-Chemical Energy Storage Media

There have also been a lot of novel energy storage approaches proposed, including:

- Pumping water up a hill and using it to generate hydroelectricity

- Filling giant underground caverns with compressed air

- Using large banks of supercapacitors to store electricity

- Spinning large flywheels

These techniques are certainly able to store energy and make it available on demand. Bringing them up to utility-scale (or even power-a-house scale) is a challenge, though. Pumping water and compressing air are both relatively inefficient and only work in certain geographical locations. Flywheels, compressed air, and supercapacitors have a safety issue, in that if Something Goes Wrong they can release a huge amount of energy uncontrollably fast (that is to say, they can explode). To my knowledge, none of these schemes has made it past small scale pilots, though they sound promising on paper.

Upconverting Excess Electricity to Fuel

One really intriguing approach is to find a chemical process which can use electricity to produce liquid fuel, and then use that fuel to power vehicles or electric generators at times when renewable power isn't available.

This is attractive for several reasons:

- It turns excess renewable power into a valuable commodity

- It extends renewable power to cars, trucks, and airplanes, where it otherwise isn't really an option

- Liquid fuels are easy to store and transport in large quantities, making it possible to use renewable power in times and places where it otherwise wouldn't be available

- Power-to-fuel plants could be turned up or down as needed to absorb the excess electricity

If I had to guess, I would say that this is the approach most likely to win over the very long term (50+ years). There are a lot of people researching ideas in this space, but to my knowledge nobody has come up with something cheap enough at large scale. On the other hand, there are almost an infinite number of chemical possibilities, and the reward for cracking this puzzle will be immense.

Demand Shifting

The simplest and cheapest way to store power for later use is through demand shifting, adjusting when you use power to match when it's most readily available. One of the biggest consumers of power in a typical home is heating and cooling, including not just the home itself but also hot water, refrigerators, air conditioners, and so forth.

Heat (and cold) is fairly easy to store for up to a day or two. For example, thermal storage heaters (which have been available for decades) use off-peak electricity to heat up a pile of bricks, and then blow the heat into the room throughout the day as needed. Similarly, an off-peak hot water system can heat extra hot water when electricity is cheap for use at other times.

Along the same lines, freezers can get extra cold when there's cheap electricity available (so they don't have to run as much at other times), and an air conditioner could chill a pile of bricks or tank of water to make cool air available at other times.

Using tricks like this, it's probably possible to move 75% (or maybe more) of the electrical use of a typical American home to times when renewable power is available. Other appliances (clothes washers, phone chargers, etc.) can be programmed to mostly run when there's solar or wind.
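As a toy illustration of programming appliances to run when there's solar, here is a greedy scheduler that puts the biggest shiftable loads into the sunniest hours first. Everything in it is hypothetical: the hourly surplus forecast, the appliance list, and the simplification that each load runs for exactly one hour. A real controller would need an actual solar forecast and would have to respect each appliance's own constraints.

```python
def schedule_loads(surplus_by_hour, loads):
    """Greedy demand shifting: place each shiftable load (assumed to run for one
    hour) in the hour with the most solar surplus left, biggest loads first."""
    remaining = dict(surplus_by_hour)          # kWh of spare solar per hour
    plan = {}
    for name, energy_kwh in sorted(loads.items(), key=lambda item: item[1], reverse=True):
        best_hour = max(remaining, key=remaining.get)
        plan[name] = best_hour
        remaining[best_hour] -= energy_kwh     # may go negative: grid covers the gap
    return plan, remaining


# Hypothetical forecast of spare solar (kWh) by hour of day, and shiftable loads.
surplus = {9: 1.0, 11: 3.0, 13: 2.5, 15: 0.5}
loads = {"water heater": 2.0, "dishwasher": 1.2, "freezer pre-cool": 0.4}
plan, leftover = schedule_loads(surplus, loads)
print(plan)   # {'water heater': 11, 'dishwasher': 13, 'freezer pre-cool': 13}
```

Greedy largest-first is not optimal in general, but it is simple enough to run on a thermostat or appliance controller, and it captures the basic idea of moving flexible loads to wherever the sun is.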

The beauty of this approach is that it requires no new technology, and has the potential to dramatically increase the amount of our power consumption which could be met with solar or wind power. The downside is that it will require changes to almost any electrical device which can be demand-shifted, and a lot more intelligence in our power systems. But those changes can happen gradually.

It's not unreasonable to think that with aggressive demand-shifting and only a modest amount of battery storage (for lights, computers, and entertainment systems), a typical home could be built with solar power and be off-grid for close to the cost of grid power.

Are Utilities Anti-Solar?

Tue, 12/31/2013 - 17:20 - Shivering Timbers

There have been a bunch of news articles recently about power companies coming into conflict with customers who install solar systems. In Hawaii, where solar power is substantially cheaper than power from the utility and has become very common, Hawaiian Electric Industries (the local utility) has stopped allowing some new solar systems to be connected to the grid. In Arizona, the power company lobbied (unsuccessfully) to start charging $600/year to customers who install solar. This Bloomberg article is a nice summary of what's been going on in both states.

It would be easy to conclude from this that power companies (or at least, the ones in Hawaii and Arizona) are against solar power. I think the reality is a lot more complicated: I think the power companies are not against solar power, but have let themselves get backed into a corner created by their business model, the net-metering laws in the U.S., and politics.

Traditionally, power companies have built and operated all aspects of the electrical system including power generation and distribution. As regulated monopolies (in the U.S.), power companies' prices are generally set by a governmental agency, which allows the utility to earn a specified return on equity. This formula is supposed to compensate the utility for spending the money to build the infrastructure and allow it a fair profit without taking advantage of its monopoly position.

This system mostly works, though it does have a few quirks. Because the utility's profits are based on the total investment, it's in the best interest of the utility to spend a lot of money on infrastructure and minimize operating costs. Buying power from a third party doesn't help the utility at all, since there's no money invested in that generating capacity. However, since most power companies' rates are directly set by the government--which is ultimately answerable to voters, who don't like to see their power bills go up--they have been somewhat restrained from simply building the most gold-plated power system possible.

Net metering has been around for about 30 years (Minnesota passed the first net metering law in 1983), and requires that utilities buy excess power generated by small customers. The details vary from state to state, but in Minnesota the requirement is that the power company pay full retail for the electricity it buys. In other words, your power bill is based on the "net" amount of power you bought from the utility, not the total amount you used.

Net metering was designed to encourage people to install small solar and wind power systems. It's effectively a subsidy for customers who might need to buy electricity at some times but generate more power than they need at other times. It's a subsidy because retail electric rates combine the costs of both power generation and transmission into the price per kWh, and the net metering customer gets both the generation and the transmission costs netted out even though the customer is still using the grid to buy and sell electricity. Xcel Energy, our local power company, claims that 45% of our electricity costs are for transmission, so the net metering customer is effectively getting paid nearly double (about 1.8 times) the wholesale cost for excess power generated.
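The net metering arithmetic fits in a few lines. The 45% transmission share is Xcel's figure from the post; the monthly usage and generation numbers are hypothetical. If 45% of the retail rate covers transmission, generation is the other 55%, so a full-retail credit pays 1/0.55, or about 1.8 times, the cost of generation alone.

```python
def net_metering_bill(used_kwh, generated_kwh, retail_rate):
    """Bill on the net energy bought from the utility; a negative result is a credit."""
    return (used_kwh - generated_kwh) * retail_rate


def credit_multiple(transmission_share):
    """How many times the generation-only cost a full-retail credit pays."""
    return 1.0 / (1.0 - transmission_share)


# Hypothetical month: 700 kWh used, 500 kWh generated, $0.12/kWh retail.
print(net_metering_bill(700, 500, 0.12))   # about $24, instead of about $84 with no solar
print(round(credit_multiple(0.45), 2))     # 1.82
```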

The beauty of net metering is that it encourages connecting small power sources to the grid (where the power can be used more efficiently) and appeals to everyone's sense of fairness. In fact, several power companies voluntarily started offering net metering back in the early 1980's before any states had passed laws requiring it. It has proven a very effective incentive for the adoption of solar power once the price of solar starts to get close to the retail price of electricity.

But as the cost of residential solar power has approached (and in some cases dropped below) the retail price of electricity, net metering has started to create problems for utilities. Net metering is only workable for the power company if a very small percentage of customers sell power back to the grid. If too many customers take advantage of net metering, the subsidy can start eating into the power company's profits (though the power companies prefer to say that "it's too expensive for the other customers," as though the net metering subsidy were somehow automatically added to other customers' bills). Too many net metering customers also take a percentage of the generating capacity out of the control of the power company, which can create some real problems with keeping the grid functioning smoothly. Power grids, as implemented today, are simply not designed to account for thousands of small power plants constantly coming on- and off-line.

This is where the utilities start to get boxed in by the politics of the situation. Net metering is incredibly popular (at least among people who care about the politics of energy). It seems fair to the average consumer because the subsidy is well-hidden. And it is very effective at encouraging solar installations. But because the utilities can foresee a day when net metering and grid-tied solar will start causing them big problems, they want to get ahead of the issue.

Unfortunately, there is very little a power company can do to change net metering laws or put the brakes on solar installations without looking like the big bad bully out to squash the little guy and slow down the future of energy. And since power companies in the U.S. generally don't have the ability to set prices without government approval, it's going to be very hard for them to adjust to the new reality of widespread adoption of solar power.

At the end of the day, I don't think power companies are anti-solar. I think most power companies would be perfectly fine with generating a lot of their electricity from solar power, as long as they controlled the solar power plants and it was cost-competitive. But right now, solar is cost-effective at retail prices, and ordinary consumers are starting to adopt the technology en masse. This costs the power companies a lot of money and takes a lot of control out of their hands, and that's what they oppose.

What we need is a fundamental restructuring of our electricity markets, to create a system which is fair to everyone but still encourages people to invest in their own solar installations. This is something the power companies are going to fight, since it will likely take away a lot of their control and at least some of their profits. But the politics and the economics of the situation are against them long-term.

Solar Engineering

Tue, 12/24/2013 - 16:17 - Shivering Timbers

We've begun the design process for our solar installation and it turns out to be a lot more complicated than I expected.

Solar cells are very simple devices: light shines on them, they produce a voltage, and you get power. So you would think that designing a solar system would mostly be a matter of deciding how many solar panels you want and where to put them, then plugging a bunch of cables together to hook it all up. Unfortunately, the solar industry is a long way away from that plug-and-play world.

Solar Modules

An individual solar cell is a few inches across and produces just a few watts of power at about 0.5 volts. Even a modest residential solar system will have over a thousand solar cells. To make everyone's life easier, a few dozen solar cells are packaged together in a weatherproof frame with a glass cover to make a module. The module wires together all the solar cells in series so that the module outputs anywhere from 80 to 350 watts at a respectable voltage.
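To make the series-wiring arithmetic concrete: when cells are wired in series their voltages add while the current stays the same. The 0.5 V per cell comes from the post; the 60-cell count and 8 A cell current are assumed values chosen for illustration.

```python
def series_module(cell_count, cell_volts=0.5, cell_amps=8.0):
    """Voltage and power of cells wired in series (cell_amps is an assumption).

    Voltages add in series; the same current flows through every cell.
    """
    volts = cell_count * cell_volts
    watts = volts * cell_amps
    return volts, watts


print(series_module(60))   # (30.0, 240.0)
print(series_module(72))   # (36.0, 288.0)
```

Each cell contributes 0.5 V x 8 A = 4 W, which squares with "just a few watts," and the 60-cell result lands inside the 80-to-350-watt range for modules.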

For all the technology that goes into manufacturing solar cells, the module itself is pretty dumb. Nearly all modules just passively wire the cells together and output the resulting power as DC current on a pair of wires. As a result, the voltage and power output of a solar module will vary depending on many different factors, including the amount of light hitting the module, the temperature, and the resistance (load) of the attached circuit.

In order to get the best possible power output from a solar module, the load on the module needs to be constantly adjusted to maintain the optimal current and voltage. This is called Maximum Power Point Tracking, and it's usually the job of the inverter or a specialized device called a DC optimizer.
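One common way to do Maximum Power Point Tracking is the "perturb and observe" method: nudge the operating voltage a little, check whether power went up or down, and then either keep going or reverse. The sketch below pairs that method with a toy model of a solar module. The model parameters are invented for illustration, and I don't know which tracking algorithm any particular inverter actually uses.

```python
import math


def module_current(volts, irradiance=1.0):
    """Toy single-diode model of a ~60-cell module (all parameters hypothetical).

    irradiance is a fraction of full sun (1.0 = full sun, 0.5 = half shade).
    """
    short_circuit_amps = 9.0 * irradiance   # assumed; scales with light
    saturation_amps = 1e-9                  # assumed diode saturation current
    thermal_volts = 1.6                     # assumed: thermal voltage x ideality x cells
    amps = short_circuit_amps - saturation_amps * (math.exp(volts / thermal_volts) - 1.0)
    return max(amps, 0.0)


def perturb_and_observe(irradiance=1.0, start_volts=20.0, step_volts=0.2, iterations=200):
    """Perturb-and-observe MPPT: step the operating voltage, keep going while
    power rises, and reverse direction whenever power falls."""
    volts = start_volts
    last_power = volts * module_current(volts, irradiance)
    direction = 1.0
    for _ in range(iterations):
        volts += direction * step_volts
        power = volts * module_current(volts, irradiance)
        if power < last_power:
            direction = -direction  # overshot the peak; walk back
        last_power = power
    return volts, last_power


volts, watts = perturb_and_observe()
print(f"operating point: {volts:.1f} V, {watts:.0f} W")
```

With these made-up parameters the tracker climbs to roughly 32 V and a bit over 270 W, then hovers within a step or two of the peak. That small oscillation is the price of a method that needs no knowledge of the panel's characteristics, and it is why the tracker keeps adjusting as light and temperature change.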

Inverters

The output of a solar module is not directly usable for most electrical needs. The module produces DC power at a voltage which varies constantly, and most electrical stuff needs AC power at a stable voltage (usually 110V in the U.S.). To make the solar power usable, you need an inverter to convert the DC to AC. The inverter is where most of the intelligence of the system lives: in addition to converting DC to AC power, the inverter will track the Maximum Power Point to optimize the output of the solar modules and monitor the health and output of the system.

If your solar system is connected to the electric grid (as most residential systems are these days), the inverter is the interface between the solar panels and the grid. The inverter will make sure the phase and frequency of your AC power matches the grid, and also shut off the solar if there's a power outage. Shutting off the solar in an outage is important because otherwise your solar system would be feeding power into a dead power grid, with the risk of electrocuting power line workers trying to repair the outage. Unfortunately, that means you can't use solar as backup power (without a fair amount of extra equipment and expense to provide the needed power isolation).

String Inverters vs. Microinverters

Traditionally, a series of solar modules would have their DC outputs wired together and brought into a single centralized inverter. The problem with this is that at any given moment, different modules in the string might have different maximum power points. For example, one module might be partly shaded while the others are in full sun. Or slight differences in manufacturing can lead to slightly different power outputs on the modules.

Since the inverter has to hold a single voltage and load for the entire string, this configuration will always cause some modules to produce less than their maximum power. You also have to make sure all modules in a string are the same model from the same manufacturer--no mixing and matching whatever is cheapest this week. However, since power inverters have traditionally been big and expensive, there's been no economical alternative.

In the past few years there's been a new approach. Instead of a string of modules connected to a central inverter, each module gets its own microinverter physically connected to the backside of the module. The AC output of the microinverters is connected together and wired into the grid.

As the name implies, each microinverter is small, with a capacity of a couple hundred watts instead of the kilowatts more typical for a string inverter. The price for a bunch of microinverters is in the same ballpark as the price for a single string inverter of similar capacity (or anyway, our solar contractor is charging us the same system price whether we go with microinverters or string inverters), and having the modules output grid-ready AC power simplifies some of the design and installation.

The big advantage of microinverters is that they allow each individual module to be held at its own maximum power point, yielding more power from the system as a whole. The manufacturer claims an increase of up to 10% total output over the course of the year, though that depends a lot on the details of the system. Microinverters help more when you have different shade conditions on different parts of the solar array, since a single shaded module in a string can pull down the power output of the whole string.
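Here is a deliberately crude comparison of how one shaded module affects a string versus microinverters. It assumes each module's maximum output scales with the fraction of full sun it receives, and that the string has no bypass diodes, so the whole string is pinned to the weakest module. Real modules have bypass diodes that limit the damage, so treat the string number as a worst case. The 250 W rating and the shading pattern are hypothetical.

```python
def string_inverter_watts(irradiances, rated_watts=250.0):
    """Worst case: a series string with no bypass diodes is pinned to the
    weakest module's current, so every module delivers what the weakest one does."""
    return len(irradiances) * min(irradiances) * rated_watts


def microinverter_watts(irradiances, rated_watts=250.0):
    """Each module is held at its own maximum power point, so outputs simply add."""
    return sum(fraction * rated_watts for fraction in irradiances)


# Eight modules, one of them getting only 30% of full sun (hypothetical).
array = [1.0] * 7 + [0.3]
print(string_inverter_watts(array))   # 600.0
print(microinverter_watts(array))     # 1825.0
```

In full sun the two approaches give the same 2,000 W; the difference only shows up when modules see different conditions, which matches the point that microinverters help most on a partly shaded site.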

The biggest disadvantage of microinverters seems to be that there's only one major supplier of them, and because they're a relatively new product, some installers are not comfortable with them yet. String inverters have been in the field for decades and perform well, but microinverters only have a few years of field experience. My solar installer describes them as a bit on the cutting edge, since he's not yet confident they'll perform for the entire 30-year life of the system. For us, though, microinverters make a lot of sense because we will have significant shade issues in some corners of our array.

Mechanical

The solar modules have to be physically attached to whatever surface they will be mounted on (in our case, the roof). This attachment has to be strong enough to hold the weight of the system and keep it from blowing off in a storm. It has to be weathertight so the roof doesn't leak where the solar array is attached. And, ideally, it should be easy enough to install that the labor costs don't get out of hand.

One mechanical problem we won't have in Minnesota is making sure the roof is strong enough to support the weight of the solar arrays. Since our roofs are designed to hold a significant weight of snow and ice in the winter, any roof which was built to code should be strong enough for solar. I'm told this is not always the case in more southerly climates.

It's All Custom

Because every system is a unique combination of modules, inverters, and mechanical components, there's a fair amount of custom design for each installation. There's no question this drives costs up. One would think the industry would move towards "smart" modules with integrated microinverters, standardized connections, and a plug-and-play approach. The closest I've seen is Solarpod, which sells a "system in a box." Solarpod still relies on third-party modules and microinverters (as near as I can tell), rather than an integrated smart module.

Part of the problem is that electrical components which will be connected to the power grid need to be certified for safety, and the certification process is apparently slow and expensive. So while there are hundreds of different solar modules from dozens of manufacturers, and new modules coming on the market all the time, there are fewer companies making inverters (and only one major supplier of microinverters). And since the module manufacturers don't want to be slowed down by the certification process, it looks like for the foreseeable future we will be stuck with dumb modules and separate inverters.

I think the industry recognizes this as a problem. Many people in the solar business have spoken about driving the installed price of a complete solar system under $1/watt. That represents about a 65% to 75% decrease from today's prices. The solar modules are only around a quarter of the total system cost, so there needs to be a lot less expense in inverters, mounting, and installation labor. All that will require a less customized, more integrated approach than we have today.
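A quick calculation shows why cheaper modules alone can't get us to $1/watt. If reaching $1/watt takes a 65% to 75% cut, today's installed price is roughly $2.86 to $4.00 per watt. If modules are only about a quarter of that, everything else already costs more than $1/watt by itself. The 25% module share is the rough figure from the post, and the function names are illustrative.

```python
def implied_price_today(target_per_watt, decrease_fraction):
    """Today's price per watt if reaching the target needs this fractional cut."""
    return target_per_watt / (1.0 - decrease_fraction)


def non_module_cost(price_per_watt, module_share=0.25):
    """Everything except the modules: inverters, mounting, labor, and so on."""
    return price_per_watt * (1.0 - module_share)


for decrease in (0.65, 0.75):
    today = implied_price_today(1.00, decrease)
    print(f"{decrease:.0%} cut implies ${today:.2f}/W today; "
          f"non-module costs ${non_module_cost(today):.2f}/W")
```

Even with free modules, the non-module costs (about $2.14 to $3.00 per watt) would still be two to three times the target.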

(Thanks to Charlie Pickard of Aladdin Solar, who has been exceptionally patient with me in answering all my dumb questions.)

Snowblind

Sun, 12/15/2013 - 15:52 - Shivering Timbers

The coldest days in Minnesota also tend to be the sunniest. Those blasts of air from the North Pole bring exceptionally clear weather, with the intensity of the sun making up (somewhat) for the shortness of the days.

Given that, and knowing that solar cells are more efficient when they're cold, you would think that winter in Minnesota would be pretty good for solar production. And it would be except for the snow. A few inches of snow on the solar panels will bring the production to zero, and this time of year we also usually have at least a few inches of snow on the roof pretty much all the time.

There are a lot of solar installations where you can go online and view the production data. This seems to be a common feature of solar monitoring systems, and many people make their systems public--it's sort of a social networking thing for energy nerds. It's cool because you can go online and find solar installations near you and see how much power people are generating.

It's also a little depressing, though, when the weather is intensely sunny but the nearby solar systems are completely dark. We had our first major snowstorm of the year about ten days ago, followed by an extended period of bright sunshine and extremely cold weather. All these snow-covered solar panels are losing a lot of power! I understand that November and December are the darkest months of the year, and the solar contractors take snow cover into account when calculating how much power you should expect to generate.

Nevertheless, it somehow feels wrong to let that much sun go to waste, even though we shouldn't be expecting to generate much power this time of year, and it's dangerous to get up on the roof to clean the panels.

So I've been thinking about ways to safely and cheaply remove snow from solar panels. The "cheap" is important because removing snow from the solar panels will probably only give an extra 10% generation over the year. It's not worth it to spend a lot of money for that amount of gain.
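To put a rough number on "not worth a lot of money," here is a back-of-the-envelope sketch. It assumes the roughly 4,700 kWh/year our system is projected to produce and grid power at about $0.12/kWh (both figures appear in other posts on this blog), plus the 10% gain guessed above. It ignores discounting, so it overstates what a snow-clearing gadget could be worth. The function names are made up for illustration.

```python
# Back-of-the-envelope value of clearing snow off the panels.
# All inputs are assumptions, not measurements.

def annual_value_of_snow_removal(annual_kwh, price_per_kwh, extra_fraction):
    """Dollars per year gained if clearing snow adds `extra_fraction` to output."""
    return annual_kwh * extra_fraction * price_per_kwh


def max_justified_spend(annual_kwh, price_per_kwh, extra_fraction, years):
    """Undiscounted ceiling on what a snow-clearing system could be worth."""
    return annual_value_of_snow_removal(annual_kwh, price_per_kwh, extra_fraction) * years


if __name__ == "__main__":
    per_year = annual_value_of_snow_removal(4700, 0.12, 0.10)
    print(f"~${per_year:.0f} per year")  # about $56
    print(f"~${max_justified_spend(4700, 0.12, 0.10, 10):.0f} over 10 years")
```

At roughly $56 a year, even a ten-year payback only justifies a few hundred dollars of equipment.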

There are three basic approaches to removing snow and ice from a surface: mechanical, chemical, and thermal. It's not necessary to completely clean the solar panels, just get enough snow off so the dark surface can start absorbing light. The heat of the sun will do the rest--even with the most efficient solar panels, over half the sun's energy goes to heat the panel and not generate electricity. It might be good enough to leave up to an inch of snow and ice on the panels, if the intense sunlight defrosts the rest quickly enough. The slope of the roof and the slipperiness of the glass surface mean that if you can break the adhesion between the snow and the solar panel, it should mostly just tumble off.

- Mechanical: Physically removing the snow from the panels is the simplest method, and could be as easy as brushing it off with a roof rake. That could work for the part of our system over the garage, which is relatively close to the ground, but the panels on the roof of the house will be three stories above ground level. Climbing up on a snow- and ice-covered roof is dangerous, so a useful mechanical system has to be something automated. I've seen systems with motorized pushers which move across the solar array, and they definitely work but are too expensive.

- Chemical: Spraying some sort of deicing fluid on the solar panels should break the bond between the snow and the glass and allow the snow to fall off. The problem here is finding an effective antifreeze which will be safe for both the solar panels and the environment. Salt is a bad idea, since you've got electrical systems involved. Hot water is also a bad idea, since it will freeze in the tubes and make it a one-use system. An alcohol-based system might be environmentally safe, but alcohol can be corrosive to a lot of stuff and it's not clear if it would damage the solar equipment. Sugar water should be safe for the equipment and the environment, but isn't that great as an antifreeze. Propylene glycol should be safe for the equipment, but maybe not the environment. And so on.

- Thermal: Heating the solar modules would certainly work and be environmentally safe. The problem is that it takes a lot of energy to melt snow and ice, and it's possible that it could take more energy to shed the snow than you would generate. Partly it comes down to whether you need to melt all the snow, or just a little bit to make it slide off. Also, this kind of defroster system would probably have to be built into the solar modules at the factory and bonded to the glass, so it's not something you could easily add on after the fact.
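A rough sketch shows why melting is expensive. It takes about 334 kJ to melt one kilogram of ice that is already at 0 °C (the latent heat of fusion), plus roughly 2.1 kJ/kg for every degree needed to warm the ice up to the melting point. The snow depth, the snow density (fresh snow is very roughly 50 to 200 kg/m³), the 1 m² area and the -15 °C starting temperature below are illustrative assumptions, not measurements from our roof. The function name is hypothetical.

```python
# Energy needed to melt a layer of snow on a panel (illustrative assumptions).
LATENT_HEAT_FUSION_KJ_PER_KG = 334.0   # ice at 0 °C -> water at 0 °C
SPECIFIC_HEAT_ICE_KJ_PER_KG_K = 2.1    # approximate value near 0 °C
KJ_PER_KWH = 3600.0


def melt_energy_kwh(depth_m, area_m2, density_kg_m3, start_temp_c=0.0):
    """kWh to warm snow from start_temp_c (<= 0 °C) to 0 °C and then melt all of it."""
    mass_kg = depth_m * area_m2 * density_kg_m3
    warm_kj = mass_kg * SPECIFIC_HEAT_ICE_KJ_PER_KG_K * (0.0 - start_temp_c)
    melt_kj = mass_kg * LATENT_HEAT_FUSION_KJ_PER_KG
    return (warm_kj + melt_kj) / KJ_PER_KWH


if __name__ == "__main__":
    # One inch (0.0254 m) of fairly light snow on 1 m² of panel at -15 °C.
    energy = melt_energy_kwh(0.0254, 1.0, 100.0, start_temp_c=-15.0)
    print(f"{energy:.2f} kWh per square meter to melt it all")
```

With these assumptions, that works out to roughly a quarter of a kilowatt-hour per square meter, before counting any heat lost to the cold air. That is why melting only a thin bottom layer, so the rest slides off, makes such a difference.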

So for now, there's no obvious solution to snow on the solar panels other than the one which the professionals advise: wait for spring.

But at some point someone may invent a clever way to clean the solar array which is cheap, safe, and effective. When that happens, I'm guessing it will sell like crazy in these northern climates, just so we can avoid the heartbreak of seeing all those photons go to waste.

Our Solar Adventure Begins

Wed, 12/11/2013 - 20:07 — Shivering Timbers

This week we signed a contract with a solar contractor to install a photovoltaic system on our home in 2014. Ideally it will be operational sometime in the spring, to take advantage of the peak generation through the summer.

Details are still being worked out, but right now the plan is to install 20 modules with a total capacity of 5.4 kW. Half of these will be over the garage (facing Southeast), and half over the house (facing Southwest). This is not optimal placement, but it's not too bad. We expect this system will produce around 4,700 kWh/year of electricity, which is enough to offset about half our power consumption.

Our financial projection is that the system will break even after 15 years, and over the 30-year lifetime of the system it will generate an internal rate of return of about 5.7%. That return includes all the current solar incentives, namely the 30% federal tax credit and a production credit from Xcel Energy expected to be $0.08/kWh for the first ten years.

It's worth noting that even without the incentive payments--which are substantial--the system would still generate a positive return over its 30-year life. In this scenario, our system would have a 30-year IRR of only about 1.9%. That's not really worth doing on a strictly financial basis (though I think it's still worth it for the environmental benefits); however, it's close enough that solar will pay for itself for people who pay a little more for electricity than we do, or who have somewhat better conditions for generating power.
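For readers who want to run their own numbers, here is a minimal sketch of how a break-even year and an internal rate of return can be computed from yearly cash flows. It is not the model behind our contractor's projection, and the helper names are my own. The example inputs are assumptions: $3.30/W (inside the $3.15 to $3.50 range I was quoted in September 2013), 4,700 kWh/year, $0.12/kWh, the 30% credit, and $0.08/kWh for ten years. The sketch treats the tax credit as if it arrived up front. It also ignores panel degradation, maintenance, inverter replacement, and rising electricity prices (unless you pass `escalation`), so it will not reproduce the 15-year/5.7% figures exactly.

```python
def solar_cash_flows(cost_per_watt, watts, kwh_per_year, price_per_kwh,
                     tax_credit=0.30, pbi_per_kwh=0.08, pbi_years=10,
                     years=30, escalation=0.0):
    """Year-0 outlay followed by `years` of bill savings plus production payments.

    Simplifications (assumptions): credit taken at year 0, flat output,
    no maintenance costs, retail price grows at `escalation` per year.
    """
    flows = [-cost_per_watt * watts * (1.0 - tax_credit)]
    for year in range(1, years + 1):
        price = price_per_kwh * (1.0 + escalation) ** (year - 1)
        income = kwh_per_year * price
        if year <= pbi_years:
            income += kwh_per_year * pbi_per_kwh
        flows.append(income)
    return flows


def payback_year(flows):
    """First year in which the cumulative cash flow is no longer negative (None if never)."""
    total = 0.0
    for year, cf in enumerate(flows):
        total += cf
        if total >= 0:
            return year
    return None


def npv(rate, flows):
    """Net present value of yearly cash flows, with flows[0] at year 0."""
    return sum(cf / (1.0 + rate) ** t for t, cf in enumerate(flows))


def irr(flows, lo=-0.9, hi=1.0, tol=1e-10):
    """Internal rate of return by bisection; assumes NPV changes sign once in [lo, hi]."""
    f_lo = npv(lo, flows)
    if f_lo * npv(hi, flows) > 0:
        raise ValueError("NPV does not change sign in [lo, hi]")
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        f_mid = npv(mid, flows)
        if (f_mid > 0) == (f_lo > 0):
            lo, f_lo = mid, f_mid
        else:
            hi = mid
    return (lo + hi) / 2.0


if __name__ == "__main__":
    with_incentives = solar_cash_flows(3.30, 5400, 4700, 0.12)
    print("payback year:", payback_year(with_incentives))
    print(f"IRR: {irr(with_incentives):.1%}")
    # No credit or production payment, but retail power rising 3%/yr (assumed).
    no_incentives = solar_cash_flows(3.30, 5400, 4700, 0.12, tax_credit=0.0,
                                     pbi_per_kwh=0.0, escalation=0.03)
    print(f"IRR without incentives: {irr(no_incentives):.1%}")
```

Bisection is used instead of a closed-form solution because no closed form exists for the IRR of an arbitrary cash flow series. It is slow but reliable as long as NPV crosses zero only once in the search range.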

The next steps will be to finalize the design and submit it to Xcel Energy for approval to participate in their solar program. Then we will be ready to install the system once the snow is off our roof in the spring. If all goes well, we could be starting work in April and be online shortly after.

The Solar Revolution is Here

Sun, 09/15/2013 - 14:36 — Shivering Timbers

Back in 2007 and 2008, I wrote a series of articles about the potential for solar power to become an important source of electricity. Those articles are hard to find today, because I switched blogging software and they aren't indexed well. But for reference, they were:

- July 2007: The Solar Equation, where I discussed the (then) cost of solar systems. With solar at $7 to $11 per watt of installed capacity and grid power costing me an average of $0.093/kWh, solar was far more expensive than grid power at the time. Since solar costs a lot upfront but provides free power for decades, I compared the interest expense on a long-term loan to the money saved from not paying the power company.

- July 2007: Dreaming of a Solar Future, talking about the impact of an order of magnitude price drop for solar power.

- July 2007: Solar Approaches, a discussion of several then-promising technologies to drive an order of magnitude price improvement for solar power, and what that would mean.

- December 2007: Installation Costs, where I wrote about the startup Nanosolar and its claim to be able to manufacture thin-film solar panels for under $1/watt. In my article, I wrote that installation costs would also have to come down a lot in order for solar to become economically competitive. Nanosolar recently went out of business, not because the technology failed, but because the price of traditional silicon solar panels dropped below where Nanosolar could compete.

- April 2008: The Magic Year, in which I looked at the historical price trends in solar power and predicted that sometime around 2015 solar power would be roughly the same cost as grid power over the life of the system (for me, here in Minnesota).

A lot has changed in the five years since I last visited this issue. It's time to take another look at solar and see where we are--though the title of this article is something of a spoiler.

Bottom Line: The Solar Revolution is Here

The total amount of solar power generated in the U.S. more than doubled in 2012 from 2011, and 2013 is on track to more than double again (source: US Department of Energy). The average solar power installation in the U.S. was $3.05/watt of capacity, and the cost of solar modules has dropped 60% in a year (source: Solar Energy Industries Association). For my home, I was quoted a (nonbinding) price range of $3.15 to $3.50/watt for a complete system, depending on the size.

At that price and current mortgage interest rates of 4.75%, a new solar system on my home would cost almost exactly the same as the interest on the loan to finance it. If you assume any inflation at all, the system will more than pay for itself over its lifetime, including the cost of financing. Solar power has a lot of nonfinancial benefits, including reduced greenhouse gas emissions and lower pollution, so anything close to price parity for solar is a very attractive proposition overall.
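The comparison in the previous paragraph comes down to two numbers per watt of capacity: the yearly interest on the money borrowed to buy one watt, and the yearly value of the electricity that watt produces. The sketch below is an illustration, not my actual spreadsheet, and the function names are hypothetical. Two inputs are assumptions. The production figure (about 0.87 kWh per watt per year, i.e. 4,700 kWh from a 5.4 kW system) comes from the projection in the December 2013 post on this blog. Netting the 30% federal tax credit out of the price is optional, and the result is quite sensitive to it.

```python
def yearly_interest_per_watt(cost_per_watt, interest_rate, tax_credit=0.0):
    """Interest-only carrying cost of financing one watt (after any tax credit)."""
    return cost_per_watt * (1.0 - tax_credit) * interest_rate


def yearly_savings_per_watt(kwh_per_watt_year, price_per_kwh):
    """Value of the electricity one watt of panels produces in a year."""
    return kwh_per_watt_year * price_per_kwh


if __name__ == "__main__":
    kwh_per_watt = 4700 / 5400  # assumed specific yield, ~0.87 kWh/W/yr
    savings = yearly_savings_per_watt(kwh_per_watt, 0.12)
    for credit in (0.0, 0.30):
        for cost in (3.15, 3.50):
            interest = yearly_interest_per_watt(cost, 0.0475, credit)
            print(f"credit={credit:.0%} cost=${cost:.2f}/W: "
                  f"interest ${interest:.3f}/W/yr vs savings ${savings:.3f}/W/yr")
```

Under these assumptions, the interest and the savings land within a fraction of a cent of each other (about $0.105 vs. $0.104 per watt per year) when the credit is netted out of a $3.15/W price. The loop also prints the other combinations, so you can see how much the answer depends on the inputs.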

With solar now the same price as grid power or cheaper, and actual solar generation exploding, I think it's fair to say that the solar revolution has arrived. Solar may still be below most people's radar, but the economic, environmental, and social forces are pretty much overwhelming at this point. Within a few years, it will be obvious to everyone that our electric system is quickly and dramatically shifting to where a huge fraction of our energy needs are being met by solar panels distributed across millions of homes and commercial buildings.

What's Changed since 2008?

I originally pegged 2015 as the year when solar would be the same price as grid power in Minnesota. It looks like I was off by a couple years, and 2013 is the real year. Still, I think that's a pretty good prediction for being six years in advance, and made when a lot of people were asking "whether" solar power would be cheaper and not "when."

What's different today? A bunch of details all conspired to make solar get cheaper faster than I expected:

- A bloody price war over the past few years, during which the price of solar modules dropped to under $1/watt. Even though solar has been on a long-term trend of dropping prices, I don't think anyone predicted a change this dramatic. Some accuse the Chinese of dumping modules to drive other countries off the market--I'm not so sure. The refined silicon used in silicon cells has gone from shortage to surplus in that time, and the most prominent companies which failed were betting on alternative technologies.

- Long-term interest rates are astonishingly low. The relevant interest rate is a 30-year fixed mortgage, which is how a typical homeowner is likely to borrow the cost of the system. It also happens that 30 years is about the expected lifetime of a solar installation. I don't think anyone would have predicted 4.75% mortgages in 2013; I used 7% in my estimates in 2007. Lower interest rates make any long-term capital investment cheaper, and so they make a solar system effectively cheaper.

- Grid power has become more expensive. We are now paying about $0.12/kWh for electricity here (including taxes and fees), which is up a lot from $0.093/kWh in 2007. That's about 4.3% annual inflation for electricity, substantially higher than the 1% to 2% inflation since the financial crisis started in 2008. If our power bill had tracked inflation, we would be paying about $0.10/kWh today.
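The arithmetic behind the last two bullets can be checked with a few standard formulas. The first is the compound annual growth rate from $0.093 to $0.12/kWh over the six years from 2007 to 2013. The second is what $0.093 would have become at general inflation (1.5%/year is an assumed midpoint of the 1% to 2% range). The third is the level annual payment on a 30-year loan at 7% versus 4.75%, per $1,000 borrowed. Annual rather than monthly compounding is a simplifying assumption, and the helper names are illustrative.

```python
def cagr(start, end, years):
    """Compound annual growth rate between two values."""
    return (end / start) ** (1.0 / years) - 1.0


def grow(value, rate, years):
    """Value after compounding at `rate` for `years`."""
    return value * (1.0 + rate) ** years


def annual_payment(principal, rate, years):
    """Level annual payment that pays off `principal` in `years` (annuity formula)."""
    if rate == 0:
        return principal / years
    return principal * rate / (1.0 - (1.0 + rate) ** -years)


if __name__ == "__main__":
    print(f"electricity: {cagr(0.093, 0.12, 6):.1%} per year")     # ~4.3%
    print(f"at 1.5% inflation: ${grow(0.093, 0.015, 6):.3f}/kWh")  # ~$0.10
    for r in (0.07, 0.0475):
        print(f"{r:.2%} loan: ${annual_payment(1000, r, 30):.2f} per year per $1,000")
```

The loan comparison comes out to roughly $81 versus $63 a year per $1,000 borrowed, so the same system costs about a fifth less to carry at today's rates.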

How Will Power Companies Deal?

This explosion in distributed solar power is going to radically change how power companies work. As long as solar is a small piece of the total energy pie, they can manage. But when it hits 5%, 10%, 25%, things will have to change. It's not unrealistic to expect that distributed solar generation could be approaching 25% of power generation by 2020--only seven years from now. Indeed, if solar generation continues to double every year, it'll blow through 25% by 2018--but as the installed base of solar grows, the percentage growth rate will slow.
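The "doubling every year" arithmetic can be sketched as a loop that compounds a starting share of total generation until it reaches a target. The starting share is left as an input because this post doesn't pin it down. The optional `slowdown` factor shrinks the growth rate each year; it is a crude, assumed stand-in for the slowing growth described above. The function name is hypothetical.

```python
def years_to_reach(share, target, growth=1.0, slowdown=1.0, max_years=100):
    """Years until `share` (a fraction of all generation) reaches `target`.

    growth:   fractional growth per year (1.0 means doubling).
    slowdown: multiplier applied to `growth` each year (1.0 = constant growth).
    Returns None if the target isn't reached within max_years.
    """
    for year in range(1, max_years + 1):
        share *= 1.0 + growth
        growth *= slowdown
        if share >= target:
            return year
    return None


if __name__ == "__main__":
    print(years_to_reach(0.01, 0.25))                # 1% doubling every year
    print(years_to_reach(0.01, 0.25, slowdown=0.8))  # growth rate shrinking 20%/yr
```

For example, a 1% share that doubles every year passes 25% in year five (1%, 2%, 4%, 8%, 16%, 32%). Even a modest slowdown stretches that out considerably.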

One of the first things to go will have to be the current net-metering schemes. Under these plans, solar generators can run their meters backwards, getting paid for the power they put on the grid. In Minnesota, small generators get paid full retail. Since the power company has a lot of fixed costs baked into the retail rate, if there's too much net metering going on the power company is guaranteed to lose money. So we will probably see something like a retail/wholesale model where power companies pay only a penny or two per kWh (comparable to their fuel costs in a coal or gas plant) for power put onto the grid. Or we may see power bills changed to have a large, fixed monthly fee for access to the grid, and lower prices per kWh. We are already seeing some noise from the utilities that they need to change net metering laws.
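To see why the utilities care, compare a month's bill under full-retail net metering with a hypothetical retail/wholesale ("net billing") scheme. Under net billing, exported power earns only a penny or two per kWh, and there can optionally be a fixed monthly grid fee. The household numbers below are made up for illustration, as is the split between solar power used on the spot and solar power exported. The function names are hypothetical too.

```python
def net_metering_bill(consumed_kwh, generated_kwh, retail_rate):
    """Meter runs backwards: every kWh generated offsets a kWh bought at retail."""
    return (consumed_kwh - generated_kwh) * retail_rate


def net_billing_bill(consumed_kwh, self_used_kwh, exported_kwh,
                     retail_rate, export_rate, fixed_fee=0.0):
    """Solar used on the spot avoids retail purchases; exports earn only export_rate."""
    imported_kwh = consumed_kwh - self_used_kwh
    return fixed_fee + imported_kwh * retail_rate - exported_kwh * export_rate


if __name__ == "__main__":
    # Hypothetical month: 800 kWh used, 500 kWh generated
    # (200 kWh used on the spot, 300 kWh exported).
    print(f"net metering: ${net_metering_bill(800, 500, 0.12):.2f}")
    print(f"net billing:  ${net_billing_bill(800, 200, 300, 0.12, 0.02):.2f}")
    print(f"fee + lower rate: ${net_billing_bill(800, 200, 300, 0.10, 0.02, 20.0):.2f}")
```

In this made-up month, the same panels cut the bill to $36 under net metering but only to $66 under net billing. That $30 gap is revenue the utility gives up when exports are credited at full retail.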

Another change will be the inversion of peak hours. During sunny days, there could be so much power going onto the grid that it actually offsets all the use from air conditioners, leaving the power company with a surplus of power. Nighttime will be the new peak, as all the solar goes offline. This will really mess up power companies' long-term planning (remember, these guys forecast and plan decades in advance).
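The peak-inversion idea is just subtraction: what the rest of the grid has to supply each hour is demand minus solar output. The hourly profiles below are invented round numbers, not utility data. They were chosen only to show the peak moving from afternoon to evening once solar is large enough. The helper names are illustrative.

```python
def net_load(demand, solar):
    """Hourly load left for conventional plants after subtracting solar output."""
    return [d - s for d, s in zip(demand, solar)]


def peak_hour(load):
    """Index of the hour with the highest load."""
    return max(range(len(load)), key=load.__getitem__)


if __name__ == "__main__":
    # Invented summer-day profiles in arbitrary units (index 0 = midnight).
    demand = [60, 57, 55, 54, 54, 56, 62, 70, 76, 80, 84, 88,
              92, 95, 97, 98, 97, 95, 92, 88, 82, 75, 68, 63]
    solar = [0, 0, 0, 0, 0, 0, 2, 8, 18, 28, 36, 42,
             45, 45, 42, 36, 28, 18, 8, 2, 0, 0, 0, 0]
    print("peak hour without solar:", peak_hour(demand))
    print("peak hour with solar:   ", peak_hour(net_load(demand, solar)))
```

With these made-up numbers, the peak moves from 3 p.m. to 7 p.m., after the sun is mostly gone.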

Finally, if anyone comes up with a cost-effective utility-scale way to store electricity, we could see the power companies go from being in the generation and transmission business to being in the storage business. Imagine giant banks of batteries, big enough to power a whole city for days at a time, and you have a picture of what the power company of the future might be.

Practical Considerations

I've been starting to get serious about researching solar power for my home. Serious as in identifying contractors, getting some cost estimates and site selection, and looking into the nuts and bolts.

My biggest concern was that the roof on our house isn't ideally aligned. Instead of facing South, our roof faces Southwest. It turns out, though, that this won't cost us too much in generation capacity: according to NREL's PVWatts calculator (an excellent resource), we lose less than 10% of the power output by not having a perfectly South-facing roof. That's because over the course of the day and the year the sun is all over the sky, and any fixed solar panel will produce about a third less power than one which tracks the sun. So being a little off from ideal doesn't average out to all that much less power.
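As a rough check on why facing Southwest costs so little, the sketch below adds up the cosine of the angle between the sun and the panel over one clear day. It uses the standard textbook incidence-angle formula for a tilted surface, with surface azimuth measured from due South (West positive) and hour angle negative in the morning. It counts only direct sunlight and ignores the atmosphere, clouds, and diffuse light. It also looks at a single day (the equinox), so it is a geometry illustration, not a substitute for PVWatts. The latitude (about 45° N for Minneapolis), the 30° roof tilt, and the function names are assumptions.

```python
import math


def cos_incidence(lat, decl, tilt, azimuth, hour_angle):
    """Cosine of the sun's angle of incidence on a tilted surface (angles in radians).

    azimuth: 0 = facing due South, positive toward West.
    hour_angle: 0 at solar noon, negative in the morning.
    """
    sd, cd = math.sin(decl), math.cos(decl)
    sl, cl = math.sin(lat), math.cos(lat)
    sb, cb = math.sin(tilt), math.cos(tilt)
    sg, cg = math.sin(azimuth), math.cos(azimuth)
    sw, cw = math.sin(hour_angle), math.cos(hour_angle)
    return (sd * sl * cb - sd * cl * sb * cg + cd * cl * cb * cw
            + cd * sl * sb * cg * cw + cd * sb * sg * sw)


def daily_beam(lat_deg, decl_deg, tilt_deg, azimuth_deg, steps=1440):
    """Relative direct-beam energy on the panel over one day (no atmosphere)."""
    lat, decl = math.radians(lat_deg), math.radians(decl_deg)
    tilt, az = math.radians(tilt_deg), math.radians(azimuth_deg)
    total = 0.0
    for i in range(steps):
        w = math.radians(-180.0 + 360.0 * (i + 0.5) / steps)  # midpoint of each step
        # On a horizontal surface the formula reduces to the sun's elevation test.
        if cos_incidence(lat, decl, 0.0, 0.0, w) > 0:
            total += max(0.0, cos_incidence(lat, decl, tilt, az, w))  # sun behind panel -> 0
    return total


if __name__ == "__main__":
    south = daily_beam(45, 0, 30, 0)       # equinox, panel facing due South
    southwest = daily_beam(45, 0, 30, 45)  # same panel turned 45° toward West
    print(f"Southwest / South: {southwest / south:.2f}")
```

On the equinox, the ratio comes out at about 0.93, roughly a 7% loss. That is in the same ballpark as the PVWatts figure, even though this toy model leaves out most of what PVWatts accounts for.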

We have a fair amount of unshaded area on our house for solar panels, both on the roof and the outside wall facing Southwest. So we could install a fairly large system. No worries there. I expect that Xcel Energy will be lobbying hard in 3-5 years to dramatically cut back the net metering laws in Minnesota, so I don't want to install an oversized system designed to make a profit--that strategy probably won't work. Instead, it's probably best to try to offset our own peak usage for now, and leave room for expansion as solar prices drop and it becomes clearer what regulatory changes might come along in 5-10 years.

Right now I'm thinking we'll install solar in 2014 or 2015. It's not clear that we'll see more short-term price drops after the huge declines in the past two years, so there may not be much advantage waiting an extra year.

Hyperloop: Terrifying Thrill Ride, or Serious Transportation Proposal?

Wed, 08/14/2013 - 12:18 — Shivering Timbers

After a couple months of teasing and putting the "hype" in "Hyperloop," Elon Musk recently took the wraps off his proposed ultra-high-speed transit system. The proposal is to put a pair of pipes between Los Angeles and San Francisco, pump out 90% of the air, and shoot capsules through at 700 MPH. Musk's claim is that the system would cost $6 billion, take 30 minutes to make a one-way trip, and could be built in a decade or so.

I was disappointed to read the "analysis," which struck me as very superficial for the dramatic claims being made. The cost estimate, in particular, seems wildly off-base: I don't see what would enable Hyperloop to be built as an elevated system for less than a tenth the cost of a ground-level bullet train using proven technology. It seems fair to assume that where the bullet train has gone through detailed engineering design and cost analysis, the Hyperloop cost is (at best) a back-of-the-envelope calculation using some crazy optimistic assumptions.

There are other problems with this proposal (and I'll summarize some at the end of this article), but the biggest flaw, in my mind, is that there seems to be zero consideration for passenger comfort.

The passenger capsule would seat 28 people in a 2x14 configuration. The exterior cross-section of the capsule is six feet high by a little over four feet wide (the interior cross-section isn't specified, but is probably no greater than four feet by four feet). Assuming that there's no interior aisle, that gives you a seat a little wider than economy class on an airplane, but no ability to get up and move around once the capsule is closed. There's no bathroom on board (presumably you're expected to hold it for the 30-minute trip), and there would be no cabin attendant, since a crew member wouldn't be able to get back to a passenger to help with anything.

Once underway, the capsule would be subjected to up to 1g of acceleration and deceleration, and 0.5g of acceleration around corners (which passengers would feel as about 1.1g of vertical acceleration as the capsule banks). The lateral acceleration is significantly more than you would feel in a commercial airliner, and is more like what you would experience in a thrill ride. The vertical acceleration is within the 0.75g - 1.25g envelope of a typical airline flight. However, the proposed route appears to go directly from straight to a full 0.5g turn, so passengers would experience a sudden "snap" as the capsule banked and accelerated into and out of a turn. More gentle entry and exit from turns would eliminate this problem, but also impose significant new route constraints which would almost certainly drive up the cost.
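The 1.1g figure follows from simple vector addition. In a turn banked so that there's no sideways push in the seat, passengers feel gravity and the turn's sideways acceleration combined: sqrt(1² + 0.5²) ≈ 1.12g, at a bank angle of atan(0.5) ≈ 27°. The same 0.5g limit also fixes the tightest curve the tube could take at full speed (radius = v²/a), which gives a sense of the scale of the route geometry involved. A short sketch (the function names are illustrative):

```python
import math

G = 9.80665           # standard gravity, m/s^2
MPH_TO_MS = 0.44704   # exact conversion factor


def felt_g(lateral_g):
    """Total acceleration felt in a coordinated banked turn, in g."""
    return math.hypot(1.0, lateral_g)


def bank_angle_deg(lateral_g):
    """Bank angle at which the turn produces no sideways force in the seat."""
    return math.degrees(math.atan(lateral_g))


def min_turn_radius_km(speed_mph, lateral_g):
    """Tightest turn radius at a given speed and lateral acceleration limit."""
    v = speed_mph * MPH_TO_MS
    return v * v / (lateral_g * G) / 1000.0


if __name__ == "__main__":
    print(f"{felt_g(0.5):.2f} g at {bank_angle_deg(0.5):.0f} degrees of bank")
    print(f"minimum radius at 700 MPH: {min_turn_radius_km(700, 0.5):.1f} km")
```

At 700 MPH and 0.5g, the tightest allowable curve has a radius of about 20 km (roughly 12 miles).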

Furthermore, the capsules would have no windows and be traveling through steel tubes, so passengers would have no way to see out. While Hyperloop promises that every seat will have an entertainment system, many people will be very uncomfortable if they can't see outside. Claustrophobia aside, subjecting someone to significant accelerations and rotational forces while removing any external visual reference is also an efficient way to induce motion sickness. Given that, windows and a transparent tube seem like necessities, not frills. I don't know how much that would add to the cost, but it doesn't seem like a small number.

So the Hyperloop experience would be something like this: you board at the departure station and get strapped in, and the station crew closes the door and seals you in with 27 other people in fairly tight quarters. Once the door is closed, you cannot get up and move around until arrival, and there is no cabin attendant. You can't see anything outside the capsule (though your entertainment system may show you a virtual landscape whizzing by), and you will feel a series of fairly strong accelerations and sudden banking motions, comparable to a roller coaster. There will be fairly constant noise and vibrations (similar to an airliner) from the compressor driving the air bearings. If anyone gets sick (which seems likely), there will be no opportunity to clean up or rearrange until arrival.

And that's if everything goes well. If there's an emergency, your capsule will be brought to an unexpected stop, and someone will tell you over the radio to stay calm and not get up. You will be stuck in the capsule (there's not enough air to breathe in the tube) until it can be brought to an emergency egress station.

That might be fun at an amusement park, but not for a transit system.

Other Issues with Hyperloop

Other commentators have raised a number of other concerns with Hyperloop. Sorry for the lack of linkage, but here's a summary of what I've read elsewhere:

- The cost estimates seem wildly low compared to the real-world costs for similar types of infrastructure projects. Musk claims that elevating the pipes on pylons will be cheaper than building the system at ground-level, but in the real world elevated systems are always much more expensive.

- Hyperloop, as proposed, would have a capacity substantially less than the California bullet train in terms of passengers per hour. The small (28 passenger) Hyperloop capsules would need to run unrealistically frequently in order to get any reasonable system capacity as compared to a bullet train which can carry over 1,000 passengers.

- Musk's proposal only considers the initial capital expense of building the system, and does not look at any of the ongoing operational expense and maintenance. Even if Hyperloop is cheaper to build than a bullet train (a dubious claim), it may be more expensive to operate.

- There's almost no consideration of failure modes and what to do in an emergency in Hyperloop. Musk claims that the system would be impervious to weather, seismic events, etc., but there seems to be no allowance for mechanical failure, onboard fire, power failure in a capsule, etc. The atmosphere inside the Hyperloop tube would be considerably thinner than outside an airliner, meaning that exiting the capsule outside a station is not an option, and any pressurization failure is much more serious.
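The capacity point is easy to quantify: passengers per hour in one direction equals seats per vehicle times departures per hour. The 28-seat capsule comes from the proposal as described above. The 1,000-seat train running every 15 minutes is an illustrative assumption, not a published schedule, and the function names are hypothetical.

```python
def passengers_per_hour(seats, headway_s):
    """One-direction capacity for vehicles departing every `headway_s` seconds."""
    return seats * 3600.0 / headway_s


def headway_for(target_pph, seats):
    """Seconds between departures needed to carry `target_pph` passengers per hour."""
    return seats * 3600.0 / target_pph


if __name__ == "__main__":
    # A 1,000-seat train every 15 minutes carries 4,000 passengers per hour.
    train = passengers_per_hour(1000, 15 * 60)
    print(f"train: {train:.0f} passengers/hour")
    print(f"28-seat capsules needed every {headway_for(train, 28):.0f} s to match")
```

Matching that hypothetical train would take a capsule leaving roughly every 25 seconds, around the clock at peak times.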

State of Home 3D Printing (Summer 2013)

Fri, 06/28/2013 - 19:48 — Shivering Timbers

The home 3D printing market has changed a lot since I wrote about the state of the market last fall.

Consumables: Cheaper and Easier to Get

Maybe not the most dramatic change, but one which makes a big difference is that it has gotten a lot easier to find high quality, inexpensive filament for home 3D printers. At the beginning of 2012, when I first got started, there were only a handful of suppliers. "Out of Stock" was the order of the day (many sellers were perpetually out of more than half their colors), and when you could get plastic it was often poorly extruded and jammed frequently.

All that has changed. There are now dozens of places to buy filament online (including Amazon), and quality control has become much better. I'm also seeing prices come down a lot: from $60/kg in early 2012 (including shipping) to where it's easy to find reputable plastic for $35-$40/kg today. If you're willing to buy in bulk, you can cut that down to under $20/kg, though specialty plastic like glow in the dark is going to be a little more expensive.

There are also some interesting new materials on the market now, like flexible plastic, color-changing plastic, and even "wood." Some of these are challenging to print well, but they give the serious hobbyist some fun options that not even the commercial-grade printers have.

Stratasys Buys Makerbot

Just in the past few weeks it was announced that Stratasys, an 800-lb gorilla of the commercial 3D printer market, is buying Makerbot, the 800-lb gorilla of the hobby 3D printer market.

This acquisition makes a lot of sense for Stratasys, since the hobby market poses a clear competitive threat to the high-end commercial machines. Many hobbyist-grade printers can do nearly everything a Stratasys machine can do, and at a tenth (or less) the cost. The main difference is that the commercial machines are generally more reliable and easier to use. A significant segment of the market will be willing to put up with the quirks of a hobbyist-grade machine for the cost savings, and the usability gap has been closing fast.

I see this acquisition possibly going one of two ways. The more exciting outcome will be if Stratasys really commits to nurturing the hobbyist market. Stratasys has a lot of technology and a patent portfolio which could be deployed to significantly improve the Makerbot printers without a significant price increase. If Stratasys does this, they will likely own the hobbyist market for many years to come.

The more depressing alternative will happen if Stratasys tries to defend its high-end printer business, and winds up crippling Makerbot. This sort of thing happens all too often when a large established company buys a smaller upstart rival. The larger company doesn't want to risk undercutting its existing business, so it carefully segments the market and doesn't allow the acquired company to develop products which might threaten the established business. Eventually, the innovation which drove the smaller company to its initial success gets strangled by the needs of the larger parent company.

It will probably be a couple years before we know how this acquisition shakes out. I really want to see it succeed, but I don't think the odds are in Makerbot's favor. It's just too hard to escape the big-company logic of not undermining your own products.

So, Have You Printed a Gun?

One of the unfortunate side-effects of the massive hype over 3D printing is that you get people trying to ride the wave to promote their own agenda. For the benefit of future readers (in case this has been mercifully forgotten in a few months), recently someone managed to 3D print a gun and fire it without killing himself. This was intended to make some sort of political point about firearm regulation: I'm a little fuzzy on the details, but it was something along the lines of "if anyone can 3D print a gun, then there's no point in trying to regulate them, so just give up."

Others have made the counter-arguments, which boil down to "anyone with access to basic metalworking tools can already make a gun, so what's your point?" and "why would you bother when it's easier and safer to buy a manufactured gun," and "just because something is easy doesn't mean it should be legal." My own opinion is that this is really just a publicity stunt, and only distracts from the more interesting (and separate) issues surrounding 3D printing and firearm regulation. It clearly is not a demonstration of either a particularly useful application for 3D printing, or responsible gun ownership.

But because it has been in the news so much, the first question I always get is, "So, have you printed a gun?" And it seems to me that if the only thing the average Joe on the street knows about 3D printing is that you can print a gun, that's not a good thing for anyone.

Bitcoin Solves the Wrong Problem

Tue, 04/16/2013 - 21:32 — Shivering Timbers

Bitcoin has been in the news lately for its amazing run-up over the past year and recent crash. For those who have been living under a rock (or anyone reading this years in the future when Bitcoin has disappeared), Bitcoin is a "virtual currency" created through some clever cryptographic techniques to ensure a limited supply of currency and unique ownership of the bitcoins.

I'm not an expert in these things, but by all accounts Bitcoin does what it claims to do. The total supply of bitcoins is limited and predictable, and ownership of bitcoins is provable and transferrable. Some people are promoting Bitcoin as a replacement for money, and claiming that it can be treated like virtual gold.

But it isn't, and I predict Bitcoin will never achieve anything close to mainstream success as actual money. The fundamental weakness with Bitcoin is that the underlying Bitcoin technology solves the wrong problem. The system uses the model of a physical commodity (like gold) as money, and therefore is engineered to guarantee a very specific and predictable supply of bitcoins.
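Here is what "a very specific and predictable supply" means in concrete terms. Under Bitcoin's published issuance rules, each new block originally created 50 bitcoins, and that reward halves every 210,000 blocks. Amounts are tracked in integer units of 0.00000001 BTC (satoshis), so each halving rounds down. The short sketch below mirrors that schedule (it is not the reference client's code, and the function names are my own). It shows that the total can never exceed about 21 million bitcoins, no matter how demand changes.

```python
SATOSHI_PER_BTC = 100_000_000
INITIAL_REWARD = 50 * SATOSHI_PER_BTC  # block reward at launch, in satoshis
HALVING_INTERVAL = 210_000             # blocks between halvings


def block_reward(height):
    """Reward in satoshis for the block at `height` (integer halving rounds down)."""
    halvings = height // HALVING_INTERVAL
    if halvings >= 64:
        return 0
    return INITIAL_REWARD >> halvings


def total_supply():
    """Sum of all block rewards ever issued, in satoshis."""
    total, era = 0, 0
    while True:
        reward = block_reward(era * HALVING_INTERVAL)
        if reward == 0:
            return total
        total += reward * HALVING_INTERVAL
        era += 1


if __name__ == "__main__":
    print(f"{total_supply() / SATOSHI_PER_BTC:,.4f} BTC")
```

The schedule is fixed in advance and responds to nothing: the reward falls on the same block heights whether people are hoarding bitcoins or spending them.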

In the real world, though, one of the most important characteristics of money is that it has a stable and predictable value. It's vitally important that a dollar today is worth very close to what a dollar was worth yesterday, and that we are confident it will be worth pretty much the same tomorrow. But the demand for money can vary widely, depending on whether people want to hold on to their money (like in 2007-2008 when the banks were collapsing) or spend and invest like crazy (like in the dot-com bubble). So the supply of money has to change to match the demand and keep the value stable.

Our financial system has a mechanism for creating and destroying money: through fractional reserve banking, banks create money by making loans, and can destroy money by calling loans in (or simply not making new loans as the old ones are paid off). The system isn't perfect, which is why we have the Federal Reserve and why different currencies change value relative to each other. But on the whole, it's pretty good most of the time.
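The textbook version of "banks create money by making loans" is the deposit-multiplier model. Suppose every bank keeps a fraction r of each deposit in reserve and lends out the rest, and every loan ends up deposited at another bank. Then an initial deposit D grows into roughly D / r of total deposits. Real banking is messier (banks are also limited by capital rules and by how much borrowers want to borrow), so treat this as a simplified illustration. The 10% reserve ratio and the function name are assumptions.

```python
def total_deposits(initial_deposit, reserve_ratio, rounds=1000):
    """Deposits created as each bank lends out (1 - reserve_ratio) of what it receives."""
    total, deposit = 0.0, initial_deposit
    for _ in range(rounds):
        total += deposit
        deposit *= 1.0 - reserve_ratio  # the lent-out part is re-deposited elsewhere
    return total


if __name__ == "__main__":
    print(f"{total_deposits(100.0, 0.10):.2f}")            # approaches 100 / 0.10 = 1000
    print(f"{total_deposits(100.0, 0.10, rounds=5):.2f}")  # after only five rounds of lending
```

Running the same process in reverse is how money shrinks: when loans are repaid and not renewed, the deposits they created disappear.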

Bitcoin, though, has no such mechanism built in. With a predetermined bitcoin supply, the value of a bitcoin is guaranteed to fluctuate like crazy as demand changes (and this is, in fact, what has been happening), and that's useless for money. In order to give some flexibility to the bitcoin supply, you have to re-introduce fractional reserve banking. That requires an oversight body like the Fed to make sure the banks do the right thing in both boom and bust. In the end, you've basically just re-created the banking system of 1928, which failed in part because the gold standard was not flexible enough to deal with the challenges of the Great Depression.

I do like the idea of having a peer-to-peer currency, where the system creates the right incentives to have a frictionless money with no requirement for central oversight. But the core problem to solve isn't fixing the supply of money, it's democratizing the process of matching money supply to money demand so that the value is stable. I don't have any idea how to do that, but I do know that Bitcoin is entirely the wrong approach.

This Annoys Me Every Time on Star Trek

Tue, 02/05/2013 - 14:57 — Shivering Timbers

There are two verbs which sound very similar but have very different meanings.

To damp means to diminish the activity of something, or to reduce the oscillations of something.

To dampen means to make something slightly wet.

So when Geordi LaForge uses a dampening field to counteract the alien probe's tractor beam (or whatever), he's actually getting it slightly wet. What LaForge really should use is a damping field.

(And yes, I know that the dictionary cross-lists both definitions for both words. To my great consternation, this misuse is now so common it has become acceptable usage.)

State of Home 3D Printing (Fall 2012)

Wed, 10/10/2012 - 14:04 — Shivering Timbers

It's hard to believe, but I've had my 3D printer for nine months. In that time I've printed hundreds of things, a couple dozen of which are my own designs. I've consumed something like 15-20 kilograms of plastic filament (yet still have an inventory of 20 kg in 15 different colors). I've replaced the platform heater on my printer about ten times, the extruder nozzle once, printed a half-dozen other replacement printer parts, and tweaked my printer in four or five ways.

So this seems like a good time to take a few steps back and make some observations about the state of home 3D printing as it stands near the end of 2012.

Home 3D Printing is Still a Hobbyist's Market

The first thing that's very clear is that home 3D printing is very much a hobbyist's market. The actual usefulness of a 3D printer in the home is still very limited, though they definitely have a role in education, architecture, engineering, and other professional settings. Arguably the most immediate economic impact of inexpensive 3D printing is to bring the technology within the reach of professionals who have a real need, but couldn't afford to spend five or six figures for a commercial-grade machine.

The vast majority of home 3D printers on the market today are very much hobbyist-grade. These are not plug-and-play: even the easiest printers require adjustment and maintenance from time to time, and most of them are very temperamental. With some of the kit printers, I've heard it can take longer to get the printer tuned and adjusted than to assemble it in the first place.

Makerbot made a lot of noise at the beginning of the year claiming that its Replicator was designed for the average consumer. Makerbot has a great history of making kits, but I don't think the company really understood what "consumer grade" means. Many of the reports I've seen say that the Replicator still needs fussing to get consistently good prints, and that it has some design and engineering defects. Makerbot recently announced the Replicator 2, which addresses some of the most obvious flaws of the Replicator (for example, the wood frame is now steel, which matters in a machine that has to be adjusted to within a tenth of a millimeter), but the Replicator 2 isn't shipping yet. I'm skeptical when Makerbot claims a product is "easy for anyone to use," so we will have to see.

My printer, the Up!, is now being OEMed in the United States by Afinia. I had a chance to meet the Afinia team and tour their facilities a few weeks ago, and I was impressed that they are putting some real design and engineering work into improving the product. The Up! is a good printer and produces very nice looking prints with only modest adjustment, but it also has its flaws. Some of the cables are underdesigned for the amount of heat, stress, and motion they have to handle (hence the ten heater cables I've gone through), and some of the adjustments have to be repeated too often. For the most part, these are solvable problems--they just haven't all been solved yet. Fortunately, the Up/Afinia is a very "hackable" printer, making it easy for other hobbyists to tinker with it and improve the design.

The printer which comes closest to being a true consumer-grade product is the Cube, a low-end product by 3D Systems. From what I've heard, the Cube requires a minimum of fuss (though it does require a little bit of adjustment, and the use of a proprietary "magic glue" platform adhesion material) and produces acceptable output. However, 3D Systems chose to lock the Cube into a proprietary filament cartridge, forcing users to buy consumables at wildly inflated prices. That may be okay for occasional use, but heavy users like me will wind up spending crazy amounts of money on plastic.

(How expensive is the Cube filament? Right now I buy filament in bulk and spend around $20/kg including shipping. Retail pricing ranges from $35/kg to $60/kg depending on the source. 3D Systems charges $50 for a filament cartridge and won't say how much it holds, but it seems to have between 200 and 500 grams. That's $100 to $250/kg, or between 5x and 12.5x what I pay now. Since I go through about 2kg/month, the Cube would cost me between $160 and $460 extra per month in filament.)
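The cartridge arithmetic above is easy to check with a few lines of Python. This is a minimal sketch: the $50 cartridge price, the 200 to 500 gram fill range, the $20/kg bulk price, and the 2 kg/month usage are the figures quoted in this post, while the constant and function names are made up for illustration.

```python
# Rough check of the Cube filament arithmetic, using the figures quoted in this post.
CARTRIDGE_PRICE = 50.0     # dollars per Cube cartridge
BULK_PRICE_PER_KG = 20.0   # bulk filament price, shipping included
USAGE_KG_PER_MONTH = 2.0   # typical monthly consumption

def cube_costs(cartridge_grams: float) -> tuple[float, float, float]:
    """Return (price per kg, multiple of the bulk price, extra dollars per month)."""
    price_per_kg = CARTRIDGE_PRICE / (cartridge_grams / 1000.0)
    multiple = price_per_kg / BULK_PRICE_PER_KG
    extra_per_month = (price_per_kg - BULK_PRICE_PER_KG) * USAGE_KG_PER_MONTH
    return price_per_kg, multiple, extra_per_month

# 3D Systems doesn't publish the fill weight, so bracket it between 500 g and 200 g.
for grams in (500, 200):
    per_kg, multiple, extra = cube_costs(grams)
    print(f"{grams} g cartridge: ${per_kg:.0f}/kg, {multiple:.1f}x bulk, ${extra:.0f}/month extra")
```

A full 500 g cartridge works out to $100/kg (5x bulk, $160/month extra), while a 200 g cartridge works out to $250/kg, which is 12.5x the bulk price and $460/month extra.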

3D Systems is going after a mass-market audience with the Cube, but I think they are at least five years too soon to market. We are still at the point where the only people interested in buying a home 3D printer are hobbyists and hobbyist/professionals--and those people want a machine they can take apart and tinker with. Worse, if the Cube is discontinued and 3D Systems stops supporting it, there is no other place to get filament. This scenario would quickly turn a Cube into a brick.

My Personal Toy Factory

I usually get one of two reactions when I tell people I have a home 3D printer: either "Cool! Where can I get one?" or "What's that good for?"

The hobbyist printer manufacturers have sometimes bent themselves into knots trying to answer the second question: You can make replacement knobs for drawers! Personalized bottle openers!

Get real. Nobody is going to spend upwards of a thousand dollars on a machine just in case they need to replace a ten-cent plastic knob someday. And until the manufacturers start posting 3D files for replacement parts, you'll have to design your own, which is way more work.

In fact, the most popular "useful" things on Thingiverse are....replacement parts for 3D printers.

But there is one thing a home 3D printer is really good at: making toys. The ABS plastic is cheap, nontoxic and durable, there are thousands of toy designs on Thingiverse for all ages and interests, and you can design your own if you want. I've made toys for my kids, toys for my friends, toys for my kids' friends, birthday presents, you name it. For my nephew's birthday I designed and printed an entire gear toy system just for him (then uploaded it to Thingiverse for the rest of the world to enjoy).

For some reason, "making toys" seems like a trivial application for a high-tech piece of fabrication equipment. But I say: let's embrace it. The toy industry is a $21 billion industry in the United States. There's nothing small or trivial about that.

The great thing about having a Personal Toy Factory is that it lets me get away from the mass-produced cheap plastic junk. It's still made out of cheap plastic, but the toys are now made specifically for the child. There are thousands of ready-made designs to choose from, and I can customize or design my own. If my nephew decides he wants to be a "Space Sheriff," then I can make him a badge with a starship on it and a six-shooter ray gun. Good luck finding that at Toys R Us.

Given that the average American household spends something like $200/year on toys, it's actually not so farfetched that an upper-income family with kids would spend a couple thousand dollars on a machine to custom-build toys for their kids. It's easy to rationalize as useful for school projects--my kids have all used 3D printed stuff for their classes--and the gee-whiz factor would close the sale.

Home 3D Printing is More Art than Science

One of the first discoveries for a new 3D printer owner is that the technology doesn't quite live up to the hype. Many people come into 3D printing thinking that they can print Anything--that was certainly my thought at first.

And you can print Anything, as long as Anything:

- Isn't too big: most home printers have a fairly limited print volume, and big prints can take a very long time (over a day in some cases).

- Isn't too small: small parts and fine details might not print at all.

- Doesn't have to be too strong: 3D printed parts can be quite strong if they are carefully designed and printed, but they can also be very delicate since the bond between layers is relatively weak.

- Doesn't have intricate overhangs or interior voids: support material can be difficult or impossible to remove without damaging the print.

Hobbyists also struggle with making sure prints stay attached to the print bed while printing (yet come off easily when done), and keeping prints from warping or splitting due to internal stresses. Commercial printers have solved these problems using techniques like carefully temperature-controlled chambers and disposable print platforms, but (for now) those techniques are proprietary and too expensive for the home market.

So there is definitely an art to getting good 3D prints from a home printer. You can't just throw any old 3D object at it and expect good results, and many hobbyists are still experimenting with new techniques.

There's also a lot of artistry in designing multi-piece things that fit well and have a "finished" look without too much cleanup work. Since 3D printed models tend to warp a bit, you can't just glue two parts together on a flat side without a visible seam. Joints need to be incorporated into the design, with allowances for gaps and other imperfections in the printing process.

More Fun Than....

I've gotten a crazy amount of enjoyment and satisfaction out of my printer. For me personally, it requires just about the right combination of tinkering and design to give me a perfect cocktail of artistry, technique, and instant gratification.

When someone asks me if he (it's usually a he) should get a 3D printer, my response is usually a little more guarded. This isn't for everyone yet, and it isn't even for most people. But if you are the kind of person who both enjoys working with mechanical stuff and also creative design, you will probably get a lot out of a 3D printer.

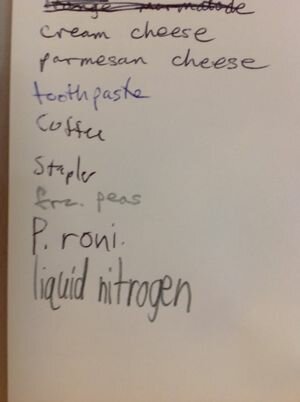

Our Shopping List

Thu, 06/28/2012 - 19:29 — Shivering Timbers

Our shopping list this week:

- orange marmalade

- cream cheese

- parmesan cheese

- toothpaste

- coffee

- stapler

- frozen peas

- pepperoni

- liquid nitrogen

My brother had his 40th birthday party last week. My gift to him was to supply liquid nitrogen ice cream for all the guests. I've been wanting to try LN2 ice cream for years, but didn't know how or where to get my hands on the stuff. In grad school we just had a tank of it in the lab. It was supposed to be for experiments, but a significant percentage was diverted for various graduate-level entertainments.

About a month ago one of the other geek dads at the twins' school served LN2 ice cream at his son's birthday party. The primary reason for enrolling one's children in a Gifted and Talented program is, of course, getting to socialize with geek dads.

I interrogated him and found out that, as long as one has the right equipment (a liquid nitrogen storage dewar), LN2 is easily obtained from your friendly local welding supply store. The nitrogen itself isn't too expensive, though the dewar is a few hundred dollars. On the other hand, the dewar will last for decades if properly cared for, so a one-time investment can mean years of uniquely nerdy party entertainment at a very reasonable price. My brother's milestone birthday gave me just the excuse I was looking for.

Unfortunately, we emptied the dewar before the kids got tired of ice cream. One of the twins (age 10) decided that more LN2 was an essential household supply.

And that is why we are the only family on the block with "liquid nitrogen" on our grocery list.

Now if you will excuse me, I need to go load the dishwasher and check on the cryo-tanks.

Seeking Plastic

Sun, 02/12/2012 - 22:36 — Shivering Timbers

My 3D printer consumes three things: my time, electricity, and miles (*) of plastic filament.

To date, I've been going through plastic at about two kilograms per month. At about $60/kg (including shipping) for the manufacturer's plastic, that's about $120 a month, roughly equivalent to a bad Starbucks habit--an affordable luxury, especially since I don't otherwise have a Starbucks habit.

There are two problems with buying plastic from the manufacturer, though: first, it costs $60/kg and I'm cheap. Second, it only comes in white.

So I have been on a hunt for alternative sources of 1.75mm ABS filament to feed my 3D habit. Hobbyist 3D printers seem to have settled on 1.75mm ABS and PLA as the "standard," so there are many sources, including other 3D printer manufacturers, third-party vendors, and guys who bought a palletload of plastic filament and sell it on eBay.

So far I've tried five different sources of filament and I'm still evaluating two others. I've paid prices ranging from $25/kg (for bulk orders) to $60/kg. And I've discovered that all plastic is not created equal.

Size: To get the best and most consistent results in my printer, the actual diameter of the 1.75mm plastic filament needs to be between 1.70mm and 1.80mm. Plastic as small as 1.60mm and as large as 1.80mm can be made to work with some effort, but diameters outside that range will not feed properly.

So far this has been the largest challenge in finding reliable suppliers. I've bought reels of filament which vary between 1.55mm and 1.95mm over a distance of less than two meters. That's simply not going to work. The result is jammed filament, failed prints, wasted plastic, and frustration.
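The diameter rules above translate into a quick check for a new reel: measure it with calipers at several points and grade each reading. This is a minimal sketch, assuming a handful of caliper readings in millimeters; the thresholds are taken from the Size paragraph, while the grade names and function names are made up for illustration.

```python
# Sketch: grade caliper readings of nominal 1.75 mm filament against the tolerances above.
IDEAL = (1.70, 1.80)      # best, most consistent results
WORKABLE = (1.60, 1.80)   # can be made to work with some effort

GRADES = ["ideal", "workable", "will not feed"]  # ordered from best to worst

def grade_reading(diameter_mm: float) -> str:
    """Classify a single caliper reading."""
    if IDEAL[0] <= diameter_mm <= IDEAL[1]:
        return "ideal"
    if WORKABLE[0] <= diameter_mm <= WORKABLE[1]:
        return "workable"
    return "will not feed"

def grade_reel(readings_mm: list[float]) -> str:
    """A reel is only as good as its worst reading: one bad spot can jam the feed."""
    if not readings_mm:
        raise ValueError("need at least one reading")
    return max((grade_reading(d) for d in readings_mm), key=GRADES.index)

good_reel = [1.72, 1.75, 1.78, 1.74]
bad_reel = [1.55, 1.71, 1.95, 1.80]  # the kind of spread described above
print(grade_reel(good_reel), "/", grade_reel(bad_reel))
```

Because the workable band shares its upper limit with the ideal band, anything thicker than 1.80mm is rejected outright, while thin filament down to 1.60mm still gets a chance.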

Of the five suppliers, three have delivered plastic which is consistently in-spec: Up (the manufacturer of my printer), Makerbot (which makes a competing hobby printer), and ProtoParadigm. The guys at ProtoParadigm get extra credit for offering bulk 30-lb spools at a substantial discount, but you have to special-order them and wait a couple months for delivery.

Plastic: It turns out that there are lots of different kinds of ABS plastic. The stuff Up sells is an extra-strong grade which gets extruded at 260C. Most other suppliers offer a lower grade of plastic which they recommend extruding at between 200C and 225C.

So far, every ABS plastic I've tried has extruded just fine in my printer, though with the lower grades of plastic it is often harder to remove support material and there tends to be more warping and lifting from the print surface. If I were trying to get perfect models every time this would bother me, but I consider this a worthwhile tradeoff for having a choice of colors and lower cost.

Colors: If Up offered a choice of colors I probably never would have started looking for other sources of plastic. Right now I have reels of about ten different colors, including glow-in-the-dark and metallic silver (which is more like graphite, but still looks sharp). Printing in color gives much more appealing results than boring old white.

Quantity: Most retail sources sell ABS filament in 1 lb or 1 kg reels, at prices around $45 - $55/kg including shipping. Special colors, like fluorescent, glow, and metallic, often cost a few bucks more. A few carry 5 lb reels at a discount. ProtoParadigm lets you special-order 30 lb reels for a significant discount.

I'm just starting to explore ordering directly from wholesalers. Minimum orders range from 10lb to 25kg, making this a little risky since I don't want to buy a year's supply of plastic and discover it consistently jams my printer. On the other hand, wholesale prices seem to be generally around $20 - $25/kg including shipping, so there's the opportunity to save a lot of money if I can find a reliable source. Wholesale orders also seem to have one to three month lead times.
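To put the wholesale numbers in perspective, here is a back-of-the-envelope comparison using the figures in this post: about 2 kg/month of consumption, retail at $45 - $55/kg, wholesale at $20 - $25/kg, and minimum orders of 10 lb to 25 kg. Taking the midpoint of each price range is an assumption made for illustration, and the function names are hypothetical.

```python
# Back-of-the-envelope comparison of retail vs. wholesale filament, using this post's figures.
LB_TO_KG = 0.45359237              # exact definition of the international pound
USAGE_KG_PER_MONTH = 2.0
RETAIL_PER_KG = (45 + 55) / 2      # midpoint of the retail range (assumption)
WHOLESALE_PER_KG = (20 + 25) / 2   # midpoint of the wholesale range (assumption)

def months_of_supply(order_kg: float) -> float:
    """How long a minimum order lasts at the current consumption rate."""
    return order_kg / USAGE_KG_PER_MONTH

def annual_savings() -> float:
    """Yearly savings from buying wholesale instead of retail, at midpoint prices."""
    return (RETAIL_PER_KG - WHOLESALE_PER_KG) * USAGE_KG_PER_MONTH * 12

for label, kg in (("10 lb minimum", 10 * LB_TO_KG), ("25 kg minimum", 25.0)):
    print(f"{label}: {kg:.1f} kg, about {months_of_supply(kg):.1f} months of plastic")
print(f"Savings at midpoint prices: about ${annual_savings():.0f}/year")
```

At 2 kg/month, a 25 kg minimum is about 12.5 months of plastic, which is exactly the year's-supply risk described above, while the midpoint prices suggest savings of roughly $660 a year.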

Stock: Right after Christmas, most sellers of 3D printer filament seemed to go out of stock on most colors. I'm not sure if this is a post-Christmas spike in demand or what, but at the moment it is a challenge to find many colors. I'm hoping that availability will improve over the next month or two as the wholesalers catch up.

My Buyer's Guide for Plastic to Feed an Up

Up: You can't go wrong with the manufacturer's own plastic. Pros: best performance and strength. Cons: comes in white only; more expensive than other retail sources; ships from China so options are limited for quick delivery.